We should be a lot more worried about the DoD / Anthropic fiasco

In the last couple of weeks, a power struggle has been brewing between the U.S. Department of Defense and Anthropic, the company that makes the Claude chatbot.

The gist of the situation is this:

In July 2025, Anthropic, OpenAI, xAI and Google were each awarded contracts of up to $200 million with the U.S. DoD. The Pentagon took a particular liking to Claude, describing it as ‘the most advanced and secure model for sensitive military applications’, NPR reports, and deployed the models on Palantir systems.

But the Pentagon’s use of Claude is subject to the same usage policy that applies to everyone else. This includes, among other things:

Do Not Develop or Design Weapons

Do Not Undermine Democratic Processes or Engage in Targeted Campaign Activities

Do Not Use for Criminal Justice, Censorship, Surveillance, or Prohibited Law Enforcement Purposes

This seems good, right? While the moral structure of any AI company is deeply murky at best, it’s at least nice that Anthropic tries to lay out the lines they say they won’t cross.

Then the U.S. raided Venezuela, removing Nicolás Maduro from power.

The department used Anthropic’s models during the raid, accessing them through Palantir’s technology stack. Afterwards, the Wall Street Journal reported that this spurred Anthropic executives to question exactly how Claude was used in the raid, angering U.S. Department of Defense chief Pete Hegseth, who later stated the DoD would not use AI models ‘that won’t allow you to fight wars’.

Whether the Maduro raid had any effect on Anthropic executives’ decision-making at all is up for speculation, considering Anthropic CEO Dario Amodei has been consistent about his willingness to work with the DoD save for specific conditions, particularly that the department doesn’t use Anthropic’s models for fully autonomous weapons or to deploy mass surveillance systems on Americans.

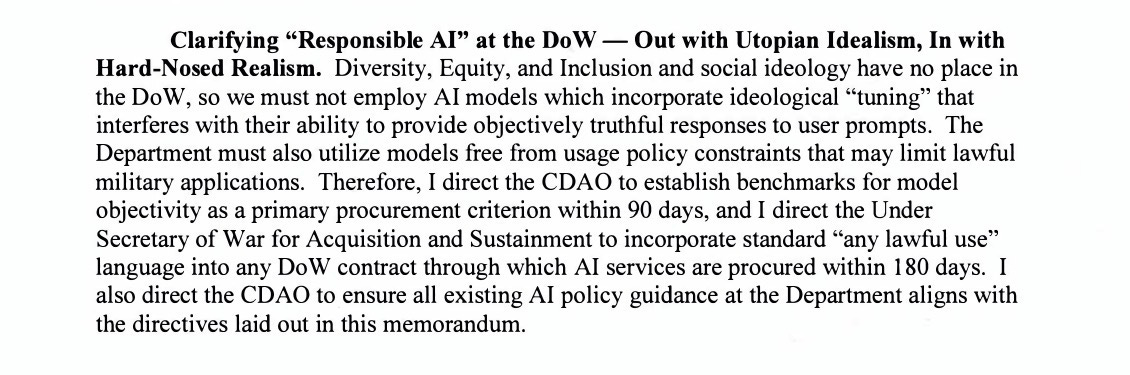

DoD chief Pete Hegseth then issued his memo ‘Artificial Intelligence Strategy For the Department of War’, dated January 9th 2026, six days after the raid. In the memo, he stated that the department would, going forward, only work with companies that gave it unfettered access for ‘any lawful use’ of their technologies.

Anthropic pushed back, refusing to budge on its constitution. It took particular issue with the department wanting to use its systems for autonomous weapons and surveillance of U.S. citizens.

The Pentagon then said it was ‘reevaluating’ its relationship with Anthropic, which bent over backwards to try to appease Hegseth. This included appointing Chris Liddell, former Trump administration Deputy Chief of Staff, to its board, as well as removing a policy committing to never train models without first guaranteeing certain safety precautions. But as most companies eventually find out, you can do as many favors as you want for this government and it often won’t matter.

Such ‘reevaluation’ actions could include canceling the awarded $200 million, labeling Anthropic a ‘Supply Chain Risk’ (severely limiting the companies Anthropic is legally allowed to do business with), or even invoking the Defense Production Act of 1950, potentially forcing Anthropic to build a custom version of Claude with unfettered capabilities.

Considering the history of this administration, I think you can easily see where this is going. This administration does not follow the law. It simply makes up its own laws, or twists existing laws to do its bidding. Anthropic could sue, but to put in context how long it can take for the Supreme Court to make up its mind, it took 324 days and $1,700 per U.S. household for the Supreme Court to rule the regime’s IEEPA tariffs unconstitutional. And it’s unlikely anyone is getting that money back any time soon. Even if Anthropic eventually won in court, a year is a hell of a long time for this government to have unfettered access to these systems. They will be used for autonomous weapons, and they will be used to drastically accelerate the mass surveillance we are currently living under. And if the government tries to use the DPA to force Anthropic to hand over Claude’s model weights, it’s unlikely it would stop using the system even if it lost legally.

Additionally, if the U.S. government labels Anthropic a Supply Chain Risk, it creates a funding vacuum for Anthropic’s massive spending commitments, such as the $50 billion in AI infrastructure it announced in November. Probably worse, depending on how the label is implemented, Anthropic could be cut off from Amazon, Google, and others as cloud providers and investors, effectively kneecapping its ability to exist at all.

I have to point out the immense irony here. The Pentagon chose Claude in the first place because of Anthropic’s constitution, which has a particular focus on alignment and eliminating potential AI hallucinations. That constitution is exactly the thing that makes Claude more stable and secure, but now the Pentagon is trying to do everything it can to get Anthropic to remove it.

If you’re wondering, ‘why doesn’t the DoD just go to the other AI companies lined up to give it unfettered access to their technologies?’, my guess is that the Pentagon has already integrated Claude into its air-gapped networks under Palantir’s IL6 accreditation, and switching over to GPT-5, Grok, or Gemini instead could take months. Though we know xAI is foaming at the mouth waiting for the opportunity regardless.

The way forward seems pretty bad! If any of this is going to be prevented, Congress needs to amend the Defense Production Act of 1950 so it can’t be used to seize intellectual property for the purpose of bypassing safety and ethical guardrails. Unfortunately, that kind of change has proven to take a hell of a lot of time, and with a deadline looming (and possibly even passed by the time you’re reading this), things could get very bad, very quickly.

At 4pm PT today, Anthropic CEO Dario Amodei issued a statement signaling that the company does not plan to back down, and will work with the DoD to transition to another provider if that’s what the department wishes. It’s interesting to see such a public rebuke of the Pentagon’s intentions. I’m happy to see Anthropic stick to its values, but I do worry that other companies would be quick to ignore such values.

As a friend of mine often says, there are many moments in history that we look back on and say “we should have done something about this”. This is certainly one of those times.